Every organization has its own processes and methodologies for vetting critical decisions with its stakeholders. The criteria used for decision-making are typically well defined and understood. Presenting the options, or trade space, crisply enough that the decision becomes apparent to the stakeholders takes some planning and creativity. This blog focuses on techniques that may be useful in Clinical Studies to make the decision-making process more engaging and efficient. It briefly introduces the techniques, then walks through a relevant example of their application.

When a key decision is warranted, multiple factors usually need to be considered. The stakeholders generally know what these factors are, but may not capture them formally or assess whether one factor is more important than another.

Technique 1: when a key decision is required, write down the factors that can affect the decision. Ideally these factors are listed in priority order; for example, the near-term cost impact is priority #1, the impact to the organization is priority #2, and the impact to the timeline is priority #3.

If the decision involves evaluating multiple factors, what is a good way to make that comparison? Are the factors quantifiable, or are they more qualitative? Understanding in advance how the factors will be “scored” makes assembling the trade space matrix easier.

Technique 2: determine the factors that affect the decision and assess which ones warrant a quantitative comparison and which are compared qualitatively. Jot these factors down at the start of the decision-making process and assign priorities to them. Assigning a weighting to each factor is a good way to express those priorities, but be aware that the weighting can pre-bias the outcome. If there is an opportunity to meet with the stakeholders in advance and collectively align on the weighting criteria, that is ideal.

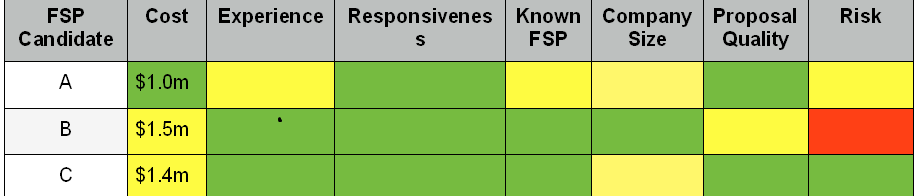

Implementation Process: the best way to present the trade space matrix comparison is a table. The options being compared go in the first column, and the factors to be assessed for each option go in the subsequent columns, with each factor in its own column. Color coding makes the comparison of factors across options easier; for the stakeholders reviewing the table, the decision may simply fall out of it. A logical color scheme is Red, Yellow, and Green. Looking across the table, if one option has every factor scored as “Green”, it is likely the obvious choice.

The same methodology can be tailored to a quantitative method. For example, each Red/Yellow/Green category is assigned a score, and the score for each factor is multiplied by that factor's weighting. Tallying these products gives each option a weighted total, which identifies the preferred option quantitatively.
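The weighted tally can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed scoring scheme: the point values for Red/Yellow/Green, the factor weights, and the two options are all assumed for the example (the factor names borrow from Technique 1).

```python
# Assumed point values for each color rating (illustrative only).
RATING_POINTS = {"Red": 0, "Yellow": 1, "Green": 2}

# Assumed weights: a higher number means a higher-priority factor.
WEIGHTS = {"Near-term cost": 3, "Organization impact": 2, "Timeline impact": 1}


def weighted_score(ratings, weights):
    """Return the sum of (rating points x factor weight) for one option."""
    return sum(RATING_POINTS[ratings[factor]] * weight
               for factor, weight in weights.items())


# Hypothetical trade space: one row per option, one rating per factor.
options = {
    "Option 1": {"Near-term cost": "Green",
                 "Organization impact": "Yellow",
                 "Timeline impact": "Red"},
    "Option 2": {"Near-term cost": "Yellow",
                 "Organization impact": "Green",
                 "Timeline impact": "Green"},
}

scores = {name: weighted_score(ratings, WEIGHTS)
          for name, ratings in options.items()}
best = max(scores, key=scores.get)

for name, total in scores.items():
    print(f"{name}: {total}")
print(f"Highest weighted score: {best}")
```

Changing the weights changes the ranking, which is why aligning on them with the stakeholders beforehand matters.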

With the techniques and approach described above, here is an example of their application in a Clinical Study.

A competitive Request for Proposal (RFP) has been issued to three prospective Functional Service Providers (FSPs): A, B, and C. The criteria the sponsor is using to down-select the preferred supplier are:

Cost: Total Cost for Full Scope of Work

Experience: Knowledge of the Therapeutic Area of Study

Responsiveness: Agility to Get Patient Enrollment Population

Known FSP: Prior History Working with FSP Vendor

Company Size: Size of FSP Vendor to Handle Workload

Proposal Quality: Quality and Completeness of Proposal Response

Performance Risk: Risk of FSP Vendor to Perform to Required Objectives

The evaluation team has completed its assessment. This can be done collectively as a group, or individuals can complete their own assessments and then convene to share their inputs. When each individual completes an assessment independently, glaring disparities on a particular category can prompt a good discussion that surfaces specific observations. After those discussions, the team can capture a collective assessment that everyone is aligned on. For this example, the assessment is qualitative, with the expectation that alignment can be reached after meeting as a team. In a quantitative assessment, averaging the results from each assessor could be the basis for decision-making.

As observed from the table above, if cost is most important, Candidate A is clearly the logical choice. Looking at the other criteria, however, this candidate is not known to the decision makers. How important is that criterion? Another question is why Candidate B submitted an inferior proposal, which also resulted in a risk score of Red. Are they too busy to prioritize this study? That would be a large concern. Another outcome could be that, for a bit more cost, Candidate C is the safer option for down-selection: it offered a good proposal and is a known organization, but it is small.
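For the quantitative variant mentioned above, one possible sketch averages each assessor's numeric score per criterion and flags criteria where the assessors disagree widely, so those can be discussed before the team aligns. The 0 to 2 scale, the disagreement threshold, the `summarize_assessments` helper, and the sample scores are all hypothetical; they are not taken from the table.

```python
from statistics import mean


def summarize_assessments(scores_by_criterion, spread_threshold=2):
    """Average each criterion's scores and flag wide disagreement.

    scores_by_criterion maps a criterion name to a list of numeric scores,
    one per assessor. A criterion is flagged for discussion when the gap
    between its highest and lowest score reaches spread_threshold.
    """
    summary = {}
    for criterion, scores in scores_by_criterion.items():
        spread = max(scores) - min(scores)
        summary[criterion] = {
            "average": mean(scores),
            "discuss": spread >= spread_threshold,
        }
    return summary


# Hypothetical scores from three assessors for a single candidate
# (0 = Red, 1 = Yellow, 2 = Green).
candidate_scores = {
    "Cost": [2, 2, 2],
    "Proposal Quality": [0, 2, 1],
}

summary = summarize_assessments(candidate_scores)
for criterion, result in summary.items():
    flag = " (discuss)" if result["discuss"] else ""
    print(f"{criterion}: average {result['average']}{flag}")
```

An average on its own can hide a split opinion, so the spread check keeps the discussion step that the individual assessments are meant to trigger.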

This approach of breaking a complex decision into smaller criteria gives a solid understanding of the key driver(s). That in turn allows for more meaningful team collaboration in reaching the decision that best fits the organization. The methodology is adaptable to many decisions beyond this example. Future blogs will offer other examples that tailor this approach.